By Monica Varela, Chief Interpreter, ILO

You are in a small, glass-fronted booth, earphones secure, microphone ready, laptop and screens switched on. You see rows of delegates down below but they barely notice you are there. One begins to speak. You listen, process the meaning, interpret into your target language with the mic on, while listening and processing the next segment of information – all this continuously and with a delay of just a few seconds.

The same scene is repeated in the row of booths next to you – in English, French, Spanish, Russian, Arabic, Chinese and German. In half an hour, to limit the stress on the brain, you will be relieved by another colleague who will repeat the sequence for another 30 minutes until it is time for you to return.

Simultaneous interpretation has become such a staple of international meetings worldwide that it’s easy to forget the almost invisible women and men behind the glass screens who have become an essential cog in the multilateral machinery.

It’s not just about translating words. It’s about inferring meaning. And by so doing, creating a level playing field that allows delegates to express themselves in their own language. This is therefore also about democracy and justice.

Many people associate the development of simultaneous interpreting with the Nuremberg trials that took place after the Second World War. In fact, it was the ILO – under its first Director, Albert Thomas – that gave birth to the concept.

Until the mid-1920s, interpretation at all ILO meetings was done consecutively. The speaker would say a few words and pause to allow the interpreter to repeat in the receiving language. It was slow and cumbersome, even though at that time, interpretation was done only in English and French.

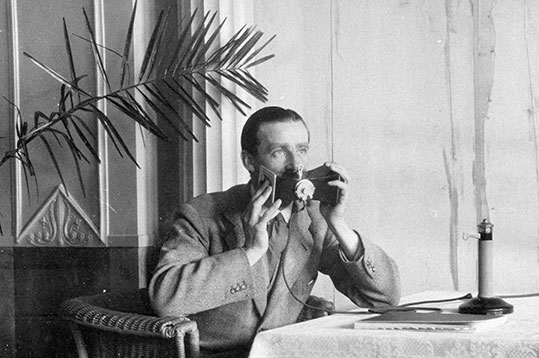

The American businessman and philanthropist E. A. Filene was an employer delegate at the International Labour Conference of 1926. That year he began a collaboration with A. G. Finlay, an engineer and temporary translator at the ILO, that led to the creation of the first telephonic system for interpretation, known as the IBM Hushaphone System Filene-Finlay. It resembled an old-fashioned Edwardian telephone with stand and earpiece, with a curved box-shaped attachment at the top to speak into.

The Hushaphone was trialled for the first time at the 1927 International Labour Conference. Soon after, its use expanded rapidly, including at the League of Nations. Since then, simultaneous interpretation has evolved and these days uses the latest technology. It’s also become a recognized profession, which has spawned a vibrant community of practitioners, researchers and trainers.

In the 1970s, a number of health problems associated with simultaneous interpretation began to be recognized, including alcoholism, sleep deprivation and anxiety. This led to a number of scientific studies on the impact on the brain of listening to, processing and interpreting speeches and interventions delivered at between 130 and 180 words a minute. As a result, specific rules were agreed on the working conditions of simultaneous interpreters, including working time and rest periods.

This week the ILO and the University of Geneva, a world leader in the teaching of simultaneous interpretation, are celebrating 100 years of conference interpreting.

It’s an opportunity to take stock of the achievements of the past and to discuss the challenges of the future.

As we go forward, a question interpreters are often asked is whether machines will take over our jobs. While there is huge potential for machines to assist simultaneous interpreters, I believe the need for humans to make sense of and give meaning to the spoken word – in whatever language – will remain.
